The ancient argument of ‘nature’ versus ‘nurture’ continues to arise in biology, and it has been raised very forcefully in a new book by James Tabery (Tabery 2023). The broad question he examines is the conflict between ‘nature’ and ‘nurture’ in western medicine. In a broad sense ‘nature’ stands for the modern push in medicine to find the genetic basis of common human diseases such as Parkinson’s, dementia, asthma, diabetes, cancer, and hypertension, to mention only a few medical problems of our day. The ‘nature’ approach to medicine in this book is represented by molecular genetics and the Human Genome Project. The ‘nurture’ approach to these medical conditions studies health outcomes in people exposed to environmental contamination, atmospheric pollution, poor water quality, chemicals in food preparations, asbestos in buildings, and other environmental influences, including how children are raised and educated. The competition between these two approaches was won very early by the Human Genome Project, and many of the resources for medicine over the last 30 years were put into molecular biology, which made spectacular progress in delving into the genomes of affected people and then made great promises of personalized medicine. The environmental approach to these medical conditions received much less money and was not viewed as sufficiently scientific. The irony of all this in retrospect is that the ‘nature’ or DNA school had no hypotheses about the problems being investigated. It relied instead on the assumption that if we collected enough molecular genetic data on thousands of people, something would jump out at us: we would locate, for example, the gene(s) causing Parkinson’s, and could then alter these genes with gene therapy or target them with specific pharmaceuticals.
By contrast, the ‘nurture’ school had many specific hypotheses to test about air pollution and children’s health and about lead in municipal water supplies and brain damage, as well as a host of very specific insights about how some of these health problems could be alleviated, for example by legislation and changes in diet.

So the question becomes: where are we today? The answer Tabery (2023) gives is that the ‘nature’ or molecular genetic ‘personalized medicine’ approach has largely failed to achieve its goals despite the large amount of money invested, because no single gene or small set of genes causes a specific disease; instead, many genes are involved and they interact in complex ways. In contrast, the ‘nurture’ school has made progress in identifying conditions that help decrease the occurrence of some of our common diseases, while recognizing that the problems are often difficult because they require changes in human behaviour like stopping smoking or improving diets.

All this discussion might produce the simple conclusion that ‘nature’ and ‘nurture’ are both involved in these complex human conditions. So what could this medical discussion tell us about the condition of modern ecological science? I think two things, perhaps. First, it is a general error to do science without hypotheses. Yet this is too often what ecologists do: gather a large amount of data that can be measured without too much prolonged effort and then try to make sense of it by applying hypotheses after the fact. Second, technology in ecology can be a benefit or a curse. Take, for example, the advances in vertebrate ecology that have come from the ability to describe the movements of individual animals in space. A map of hundreds of locations of an individual animal provides good natural history but does not by itself address any specific hypothesis. Contrast this approach with that of Studd et al. (2021) and Shiratsuru et al. (2023), who use movement data to test important questions about kill rates of predators on different species of prey.

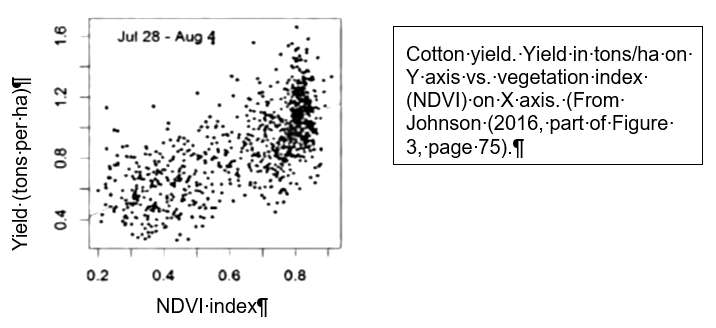

Many large-scale ecological approaches suffer from the same problem as the ‘nature’ paradigm: use ‘big science’ to measure many variables and then try to answer some important question, for example about how climate change is affecting communities of plants and animals. Nagy et al. (2021) and Li et al. (2022) provide excellent examples of this approach. Schimel and Keller (2015) discuss what is needed to bring hypothesis testing to ‘big science’. Lindenmayer et al. (2018) discuss how conventional, question-driven long-term monitoring and hypothesis testing need to be combined with ‘big science’ to improve ecological understanding. Pau et al. (2022) give a warning of how ‘big science’ data from airborne imaging can fail to agree with ground-based field studies at one core NEON grassland site in the central USA.

The conclusion to date is that, if we match ‘big science’ in ecology with ‘nature’ in medicine and field studies on the ground with ‘nurture’, there is little integration of the two in ecology. Without that integration we risk another negative review in the future, this time of ecology, like the one Tabery (2023) now provides for medical approaches to human diseases.

Lindenmayer, D.B., Likens, G.E. & Franklin, J.F. (2018) Earth Observation Networks (EONs): Finding the Right Balance. Trends in Ecology & Evolution, 33, 1-3. doi: 10.1016/j.tree.2017.10.008.

Li, D., et al. (2022) Standardized NEON organismal data for biodiversity research. Ecosphere, 13, e4141. doi: 10.1002/ecs2.4141.

Nagy, R.C., et al. (2021) Harnessing the NEON data revolution to advance open environmental science with a diverse and data-capable community. Ecosphere, 12, e03833. doi: 10.1002/ecs2.3833.

Pau, S., et al. (2022) Poor relationships between NEON Airborne Observation Platform data and field-based vegetation traits at a mesic grassland. Ecology, 103, e03590. doi: 10.1002/ecy.3590.

Schimel, D. & Keller, M. (2015) Big questions, big science: Meeting the challenges of global ecology. Oecologia, 177, 925-934. doi: 10.1007/s00442-015-3236-3.

Shiratsuru, S., Studd, E.K., Majchrzak, Y.N., Peers, M.J.L., Menzies, A.K., Derbyshire, R., Jung, T.S., Krebs, C.J., Murray, D.L., Boonstra, R. & Boutin, S. (2023) When death comes: Prey activity is not always predictive of diel mortality patterns and the risk of predation. Proceedings of the Royal Society B, 290, 20230661.doi.

Studd, E.K., Derbyshire, R.E., Menzies, A.K., Simms, J.F., Humphries, M.M., Murray, D.L. & Boutin, S. (2021) The Purr-fect Catch: Using accelerometers and audio recorders to document kill rates and hunting behaviour of a small prey specialist. Methods in Ecology and Evolution, 12, 1277-1287. doi: 10.1111/2041-210X.13605.

Tabery, J. (2023) Tyranny of the Gene: Personalized Medicine and the Threat to Public Health. Knopf Doubleday Publishing Group, New York. 336 pp. ISBN: 9780525658207.